This work builds the structural architecture for responsible AI.

Beyond technical performance, it examines how systems shape human judgment, distribute responsibility, and influence institutional legitimacy over time.

Together, these frameworks and applied prototypes form a layered model of relational depth, ethical grounding, and governance accountability.

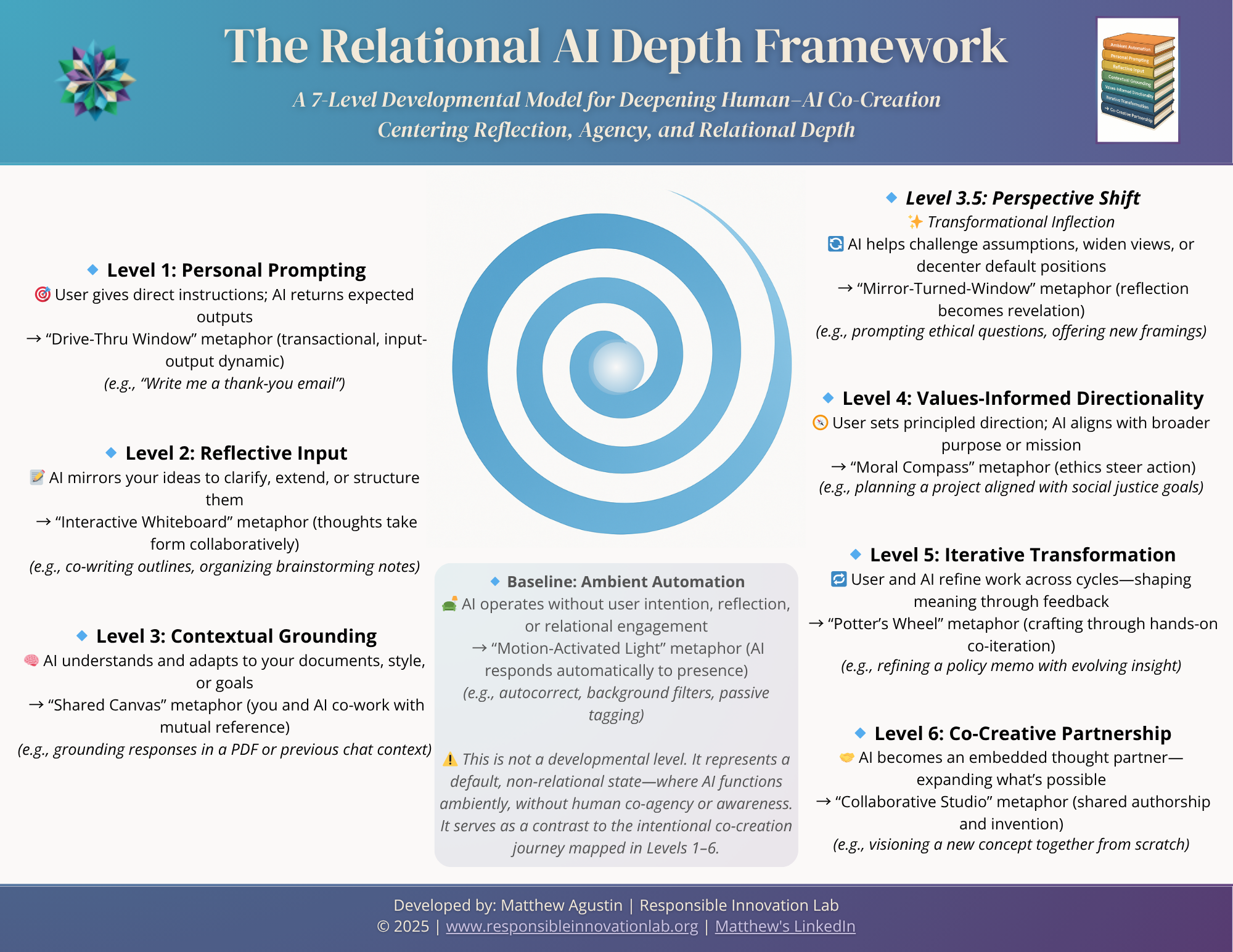

Relational AI Depth Framework — RADF

A multi-dimensional model for understanding and designing depth in human–AI collaboration across reflective, relational, and institutional layers.

Core Artifacts

Executive Summary

A brief overview of the framework’s core insight, the challenge it addresses, and its strategic value.

Format: PDF • 3 pages • 188 KB

Usage: Ideal for presentations, decision-makers, and teaching

White Paper

A deep dive into the 7-level Relational AI Depth Framework with context, rationale, and implications across AI, education, and ethics.

Format: PDF • 50 pages • 844 KB

Usage: Open for citation, educational reuse, and strategic insight

Flourishing AI Integrity Framework — FAIF

A values-centered framework for designing AI systems that prioritize human dignity, institutional responsibility, and long-term societal flourishing.

Core Artifacts

Stakeholder Brief

A concise 1.5-page overview introducing FAIF’s purpose, five meta-dimensions, and core guiding questions. Perfect for quick context-sharing with partners, funders, or collaborators.

Format: PDF • 1.5 pages • 18 KB

Usage: For citation, pitch decks, stakeholder outreach, and briefing sessions.

Meta-Dimensions & Sub-Dimensions

A compact explainer detailing FAIF’s five meta-dimensions, with clear sub-dimensions and guiding questions.

Use it to anchor training, facilitation, or teaching on trust-centered AI integrity.

Format: PDF • 3.25 pages • 197 KB

Usage: Open for research, curriculum design, and professional development in responsible AI.

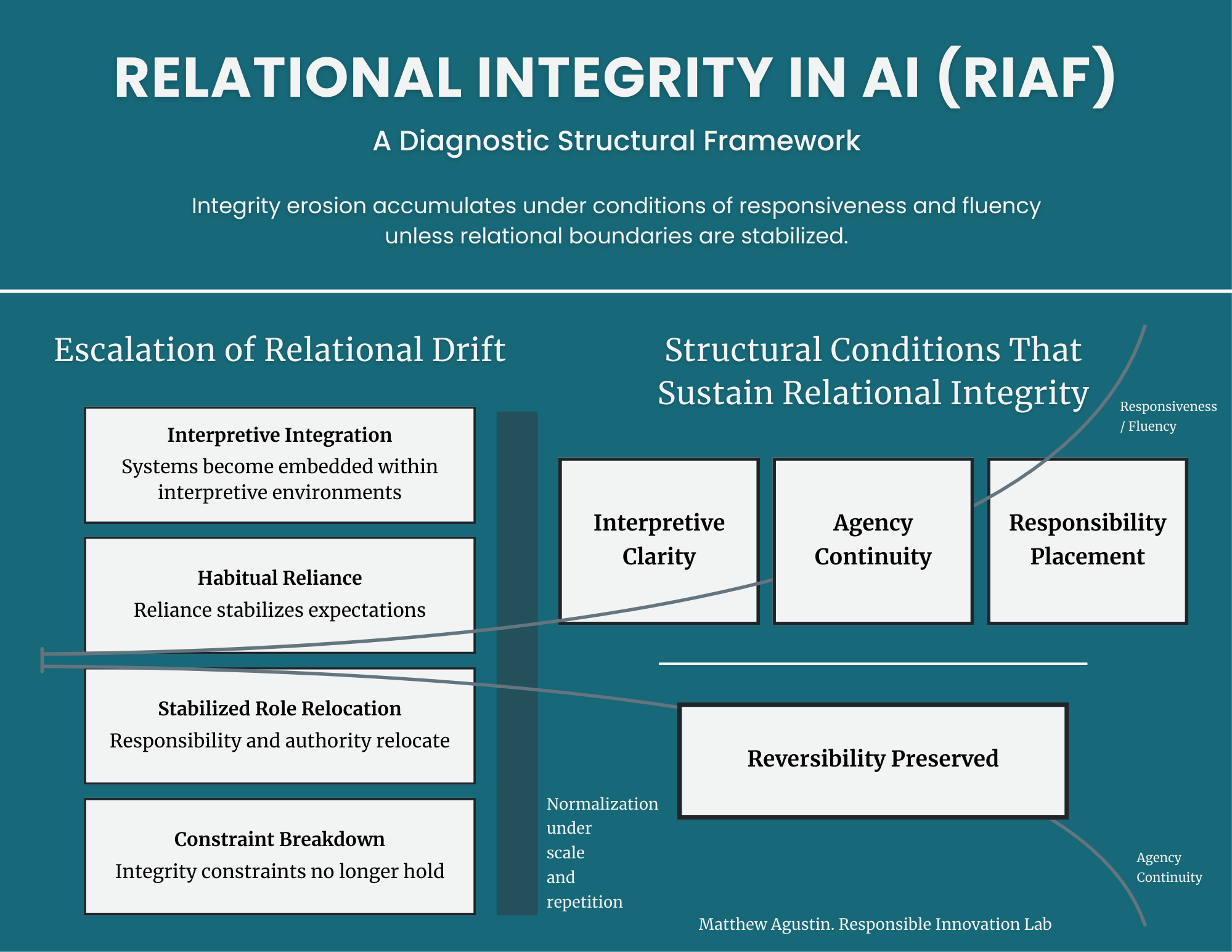

Relational Integrity in AI Framework — RIAF

A structural framework for diagnosing how AI systems redistribute authority, responsibility, and legitimacy across institutional contexts.

Core Artifacts

Manuscript Overview / Abstract

As AI systems increasingly operate in language-mediated and relational contexts, many of the risks they introduce do not arise from discrete failures, misuse, or malicious intent. Instead, harm often emerges through gradual shifts in how systems are interpreted, relied upon, and positioned within human judgment and institutional practice. These shifts are frequently well-intended, incentive-aligned, and normalized through ordinary use, allowing role clarity, accountability, and relational boundaries to erode even when systems appear effective.

The Relational Integrity in AI Framework (RIAF) offers a diagnostic approach to these dynamics. Rather than treating relational harm as a matter of compliance, output control, or user error, RIAF conceptualizes it as a structural phenomenon shaped by cumulative interaction, interpretive signals, and institutional embedding. The framework introduces relational drift as a precursor to stabilized structural failure, identifies a canonical set of relational integrity failure modes, and argues that sustained protection depends on preserving specific human capacities rather than enforcing static rules or prescriptions.

RIAF is not an implementation guide or ethical checklist. It is a conditioning framework designed to support reflection, pressure-testing of relational integrity, and responsible judgment in contexts where AI systems increasingly mediate meaning, authority, care, and decision-making.

Keywords: Relational Integrity, Human–AI Interaction, Agency and Accountability, Relational Drift, Diagnostic Ethics Framework, AI Governance and Design, Institutional Responsibility

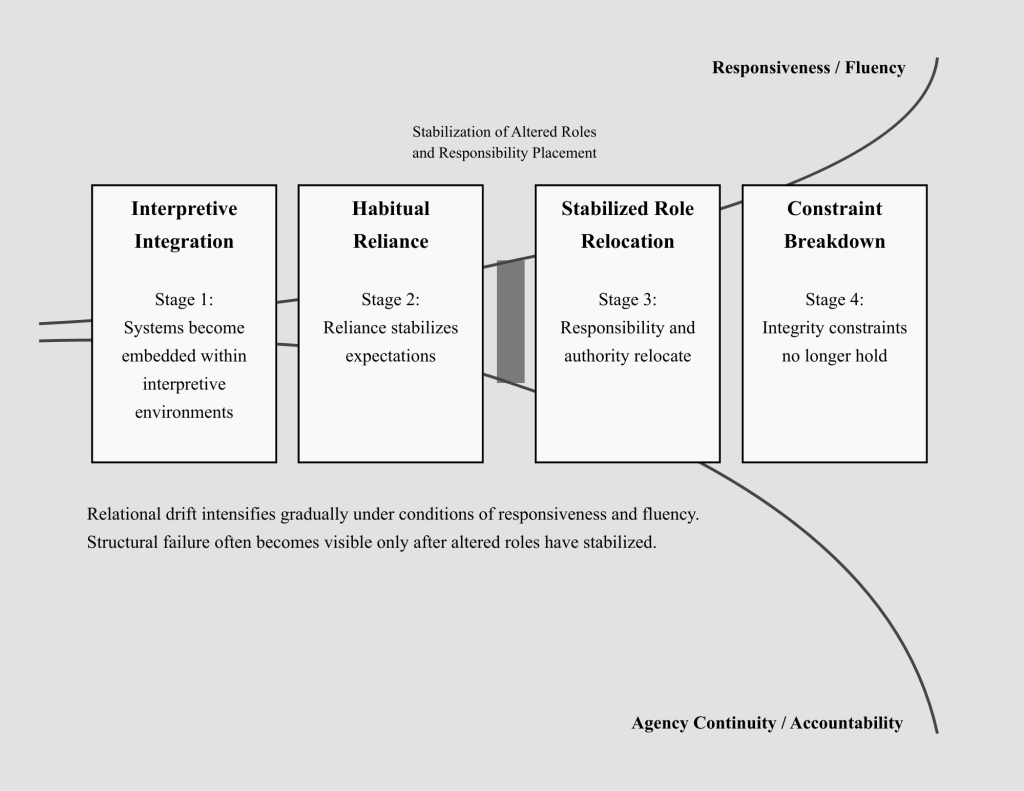

Conceptual Model Diagram

Figure 1. Escalation Dynamics of Relational Drift Under Conditions of Responsiveness and Fluency.

This figure models the structural escalation of relational drift across four stages: Interpretive Integration, Habitual Reliance, Stabilized Role Relocation, and Constraint Breakdown. As responsiveness and fluency increase, reliance stabilizes expectations, and responsibility and authority gradually relocate. Integrity constraints give way only after the altered roles have stabilized, at which point structural failure becomes visible and reversibility declines. The model clarifies how integrity erosion can accumulate under conditions of apparent effectiveness rather than technical malfunction.

AI Transparency Challenge

An applied transparency prototype exploring how AI systems reason under competing value lenses such as fairness, care, and autonomy.

The project surfaces how outputs shift across interpretive frames, contributing to public understanding of value-divergent reasoning and governance-aware evaluation.

It extends relational AI theory into diagnostic practice.

Core Artifacts

Live prototype

Methodology & Design Notes

Coming soon…